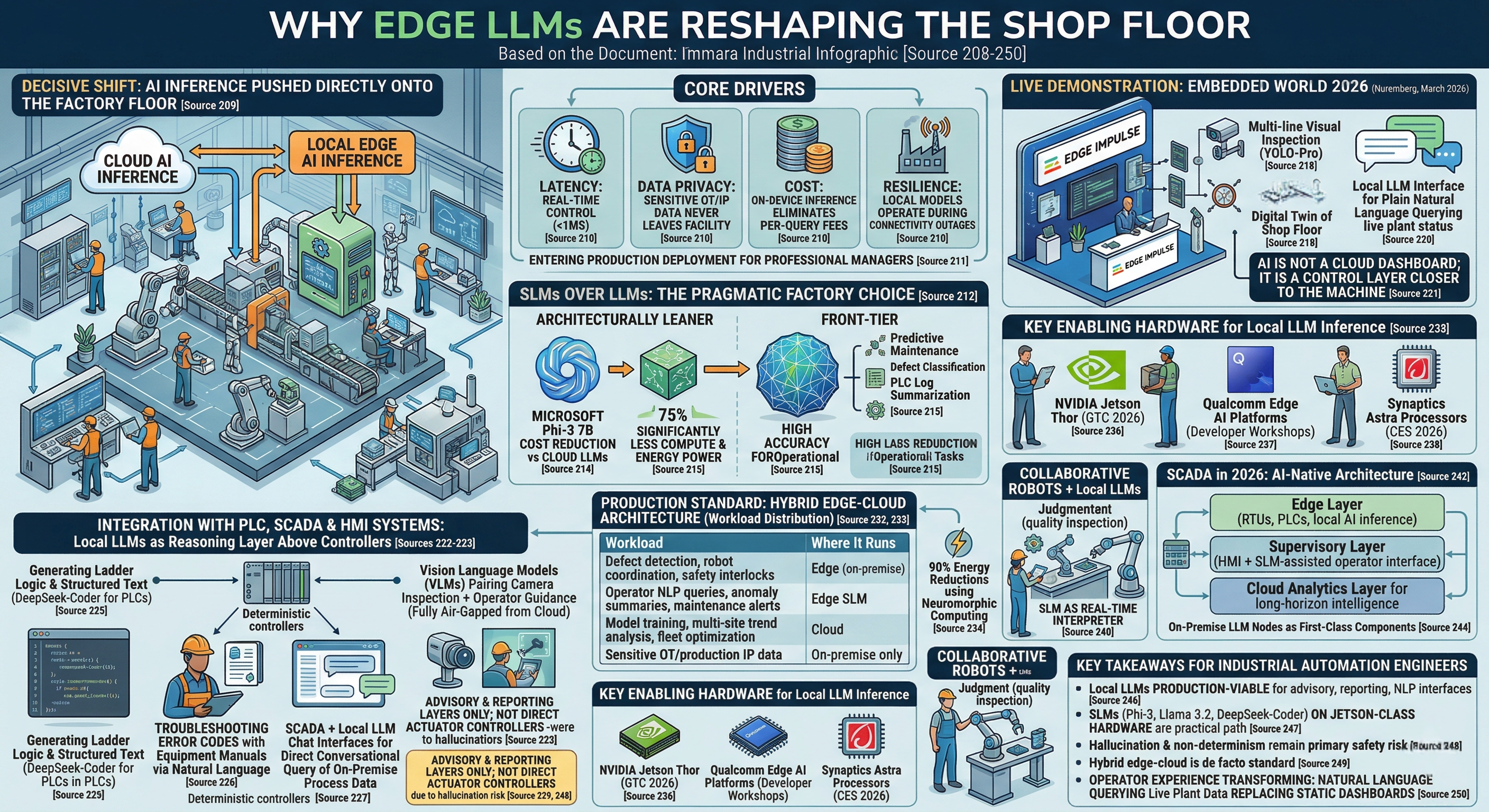

The industrial world is undergoing a decisive shift: instead of routing production data to the cloud, manufacturers are pushing AI inference directly onto the factory floor. The core drivers are latency (cloud round-trips add hundreds of milliseconds, incompatible with real-time control), data privacy (sensitive OT/IP data never leaves the facility), cost (on-device inference eliminates per-query cloud fees), and resilience (local models operate during connectivity outages). For professionals managing PLCs, SCADA, HMI, and collaborative robots, this is no longer experimental — it is entering production deployment.

SLMs Over LLMs: The Pragmatic Factory Choice

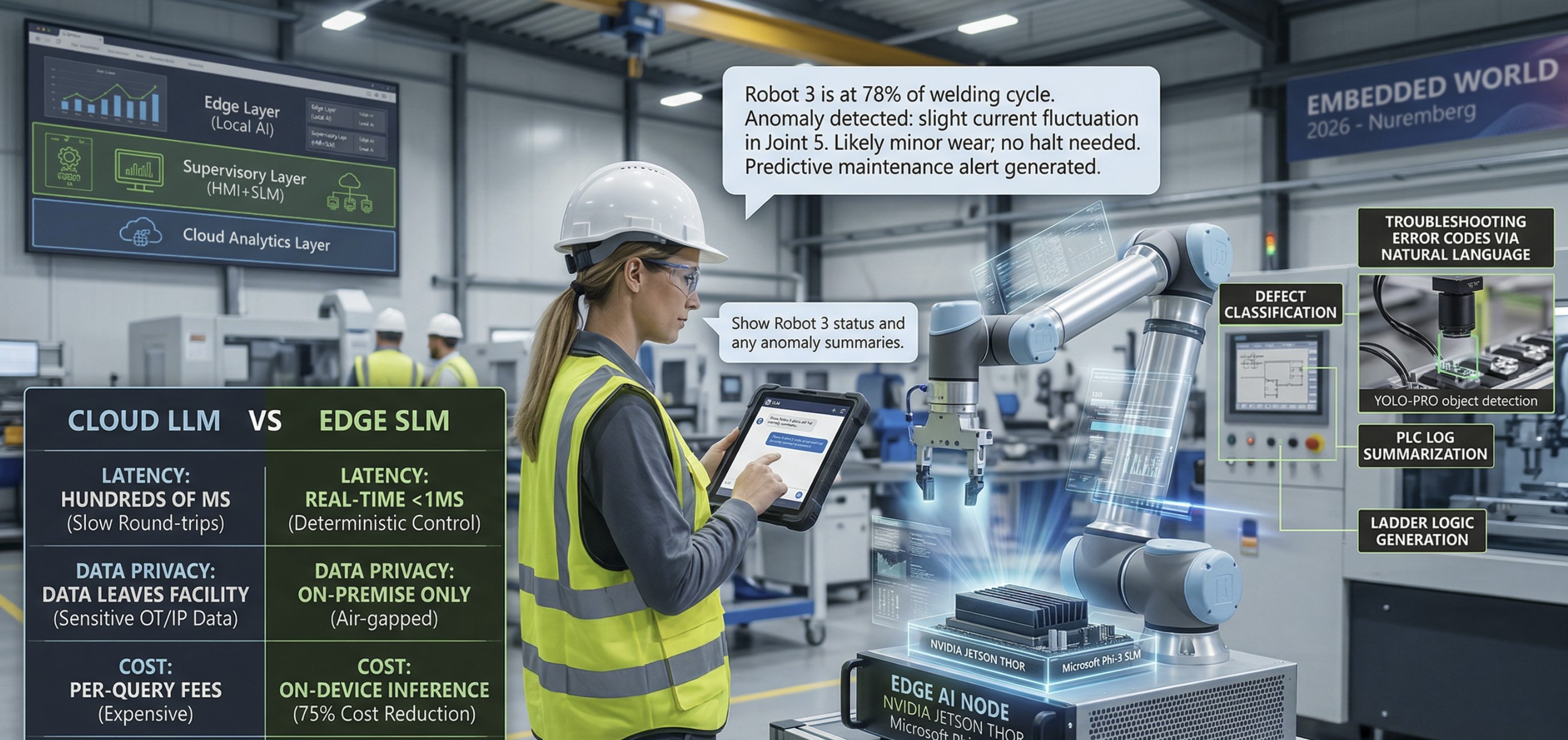

While "LLM on the edge" is the buzzword, the practical workhorse emerging in 2026 is the Small Language Model (SLM) — architecturally leaner models tuned for specific industrial tasks. Deploying models like Microsoft Phi-3 7B on NVIDIA Jetson platforms for quality inspection has already demonstrated a 75% cost reduction compared to cloud-based LLMs. SLMs require significantly less compute power and energy while delivering high accuracy for constrained operational tasks like predictive maintenance alerts, defect classification, and PLC log summarization.

Live Demonstrations: Embedded World 2026

The most compelling real-world showcase happened at Embedded World 2026 (Nuremberg, March 2026), where Edge Impulse demonstrated a full intelligent factory pipeline combining:

- Multi-line visual inspection (YOLO-Pro object detection)

- A digital twin of the shop floor

- A local LLM interface allowing operators to query live plant status in plain natural language

The key architectural insight from that demo: AI is not a cloud dashboard bolted onto production — it is a control layer sitting close to the machine, where latency and determinism matter more than headline model size.

Integration with PLC, SCADA & HMI Systems

A rapidly growing area of practical experimentation involves deploying local LLMs as a reasoning layer above existing controllers — rather than replacing deterministic PLC logic, the LLM handles edge cases and anomaly narration that rigid if-then ladders cannot express. Key industrial use cases being pursued right now include:

- DeepSeek-Coder deployed locally to generate and assist with ladder logic for PLCs in extrusion and material handling lines.

- Local SLMs embedded with equipment manuals so frontline workers can troubleshoot error codes via natural language, without navigating separate dashboards.

- SCADA + local LLM chat interfaces enabling direct conversational query of on-premise process data.

- Vision Language Models (VLMs) at the edge pairing camera-based inspection with language-based operator guidance — fully air-gapped from cloud.

Safety-critical concerns — hallucination risk, query latency, and non-determinism — remain the primary adoption blockers for closed-loop control, making LLMs most appropriate today as advisory and reporting layers, not direct actuator controllers.

Hybrid Edge-Cloud Architecture: The Production Standard

Smart manufacturers are not choosing between edge and cloud — they are distributing workloads strategically. Early adopters in automotive and electronics manufacturing report 90% energy reductions using neuromorphic computing for remote asset monitoring compared to traditional edge AI deployments.

Key Hardware Enabling Local LLM Inference

- NVIDIA Jetson Thor — Advantech showcased edge AI and Physical AI solutions powered by Jetson Thor at NVIDIA GTC 2026 (March 2026).

- Qualcomm edge AI platforms — Deep-dive developer workshops running in March 2026 focus on deploying optimized AI models directly into industrial device workflows.

- Synaptics Astra processors — Demonstrated at CES 2026 for on-device inferencing in predictive maintenance, safety monitoring, and robotic automation.

Collaborative Robots + Local LLMs

In the cobot space, local SLMs are enabling a new human-robot interaction model: humans handle judgment-driven tasks (quality inspection, exception handling) while cobots take repetitive physical tasks, with the SLM acting as the real-time interpreter between sensor telemetry and operator language. Flexxbotics released its software-defined automation platform for free in January 2026, targeting exactly this integration layer between robots, PLCs, and AI reasoning.

SCADA in 2026: AI-Native Architecture

Modern SCADA architecture as of 2026 is evolving into a three-tier structure: an Edge Layer (RTUs, PLCs, local AI inference), a Supervisory Layer (HMI + SLM-assisted operator interfaces), and a Cloud Analytics Layer for long-horizon intelligence. The MES-SCADA-PLC-IIoT integration stack is increasingly designed with on-premise LLM nodes as first-class components, not afterthoughts, especially for manufacturers handling sensitive production IP or operating in air-gapped environments.

Key Takeaways for Industrial Automation Engineers

- Local LLMs are production-viable today for advisory, reporting, and NLP operator interfaces — not yet for closed-loop safety-critical control.

- SLMs (Phi-3, Llama 3.2, DeepSeek-Coder) on Jetson-class hardware are the practical deployment path, not frontier cloud models.

- Hallucination and non-determinism remain the key safety risk; architectural separation from actuator control loops is essential.

- Hybrid edge-cloud is the de facto standard; on-premise handles real-time inference, cloud handles training and fleet analytics.

- The operator experience is transforming: natural language querying of live plant data is replacing static HMI dashboards.

About the Author

Nay Linn Aung is a Senior Automation & Robotics Engineer with 12+ years in PLC, SCADA, collaborative robotics, and AI/ML. Currently pursuing an M.S. in Computer Science (Data Science & AI) at Hood College.